HDMO’s Election Monitoring: a Summary

During the Hungarian parliamentary election campaign, HDMO not only informed the public about online behaviors that could undermine the integrity of the election, but also alerted Very Large Online Platforms (VLOPs) to potential violations of their platform rules. Our reporting activity focused on two areas: political advertisements and coordinated inauthentic online behavior.

HDMO now publishes the reports of the contributing organizations, Political Capital and Lakmusz, which supported the Rapid Response System by alerting platforms to potential violations of platform policies or DSA regulations. The Rapid Response System was activated by the signatories to the Code of Conduct on Disinformation ahead of the April 12 election. Although HDMO is not a signatory to the Code of Conduct on Disinformation, it automatically participated in the Rapid Response System as the Hungarian hub of the European Digital Media Observatory (EDMO).

Political Capital

Within the Rapid Response System (RRS), Political Capital focused on two main areas: political advertising on social media and coordinated networks of inauthentic Facebook accounts. Among platforms, we concentrated on Meta, which played a decisive role during the election campaign.

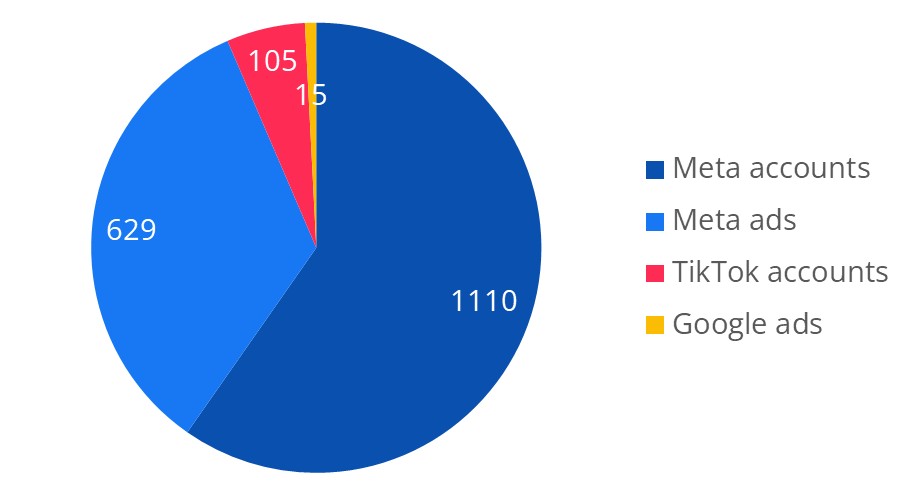

Distribution of contents reported by Political Capital

Meta

In total, 1,739 pieces of content were reported on Meta’s platform, including 1,110 profiles and 629 advertisements.

Meta removed 82% of the reported profiles and 61% of the reported advertisements. The majority of the ads that remained online could be linked to a single actor that effectively circumvented Meta’s filtering mechanisms. These ads displayed the politician’s logo while avoiding direct visual identification of the individual.

Overall, Meta removed 73% of the content reported to the platform.

Meta’s response time was relatively fast: reported content was typically reviewed within one day. Although the company indicated that it operates on a 24/7 basis, we observed no activity over weekends, and content reported during a weekend was processed only on the following Monday. The election weekend was an exception, during which responses were significantly faster, often arriving within 1–2 hours.

Google

A total of 15 advertisements were reported to Google via email. No written responses were received; as clarified during follow-up discussions, such responses would have needed to be explicitly requested. Google did not remove any of the reported advertisements. These ads promoted the government’s “National Petition” campaign. Substantially identical advertisements were also running on Facebook; these were reported to Meta and subsequently removed. This case highlights the divergence between Meta’s and Google’s advertising policies.

TikTok

On TikTok, we reported a network of 105 accounts identified by Context, the Romanian partner of the FACT Hub. TikTok did not detect any current violations in the activity of the accounts, and reports appeared to be closed very quickly on TikTok’s reporting interface (TSET). Our reports were nevertheless placed under further examination, although it remained unclear what, if any, further investigative steps were taken.

Removal rate of contents reported by Political Capital

Lakmusz

Lakmusz also focused its flagging efforts on political ads and fake profiles.

Overall, we flagged 86 URLs to Meta: 50 political ads, 31 fake profiles identified in our earlier investigation that were still active, two Facebook pages created exclusively to promote content from Russia-linked fake news sites, and three Facebook pages created exclusively to publish realistic AI-generated videos of political figures without adequate labelling.

Of the 86 URLs, Meta removed 66, so our success rate was above 75 percent. The platform took no action on one of the pages publishing AI videos (although the page is now unavailable) and on 19 political ads.
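The figures above can be cross-checked with a short calculation. The Python sketch below is illustrative only: the variable names are hypothetical, and the counts are taken directly from the text.

```python
# Counts of flagged URLs by category, as stated in the text.
flagged = {
    "political ads": 50,
    "fake profiles": 31,
    "pages promoting Russia-linked fake news sites": 2,
    "pages publishing unlabelled AI-generated videos": 3,
}

# URLs on which Meta took no action, as stated in the text.
no_action = {
    "political ads": 19,
    "pages publishing unlabelled AI-generated videos": 1,
}

removed = 66  # URLs removed by Meta, as stated in the text

total_flagged = sum(flagged.values())
total_no_action = sum(no_action.values())
removal_rate = removed / total_flagged

print(f"Flagged: {total_flagged}")
print(f"Removed: {removed}, no action: {total_no_action}")
print(f"Removal rate: {removal_rate:.1%}")
```

The category counts sum to the 86 flagged URLs, the removed and not-actioned items together account for all of them, and the resulting removal rate sits just above the 75 percent threshold mentioned in the text.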

We found that Meta sometimes disagreed with our assessment of political ads when the link between the ad and the election was not made explicit. To a Hungarian speaker familiar with the context, however, it was rather obvious that the ad was designed to influence voters.

Regarding the pages publishing AI-generated videos, it is worth mentioning that our notice referred to Meta’s manipulated media policy, which states that Meta might put an informative label on content that was digitally created or altered and may mislead people. In practice, however, Meta did not apply any labels: it completely removed two of the pages while taking no action on the third.
